2 white clip-on plastic Clip'vit+ brackets for "3-in-1" sash rod - MOBOIS - Mr Bricolage

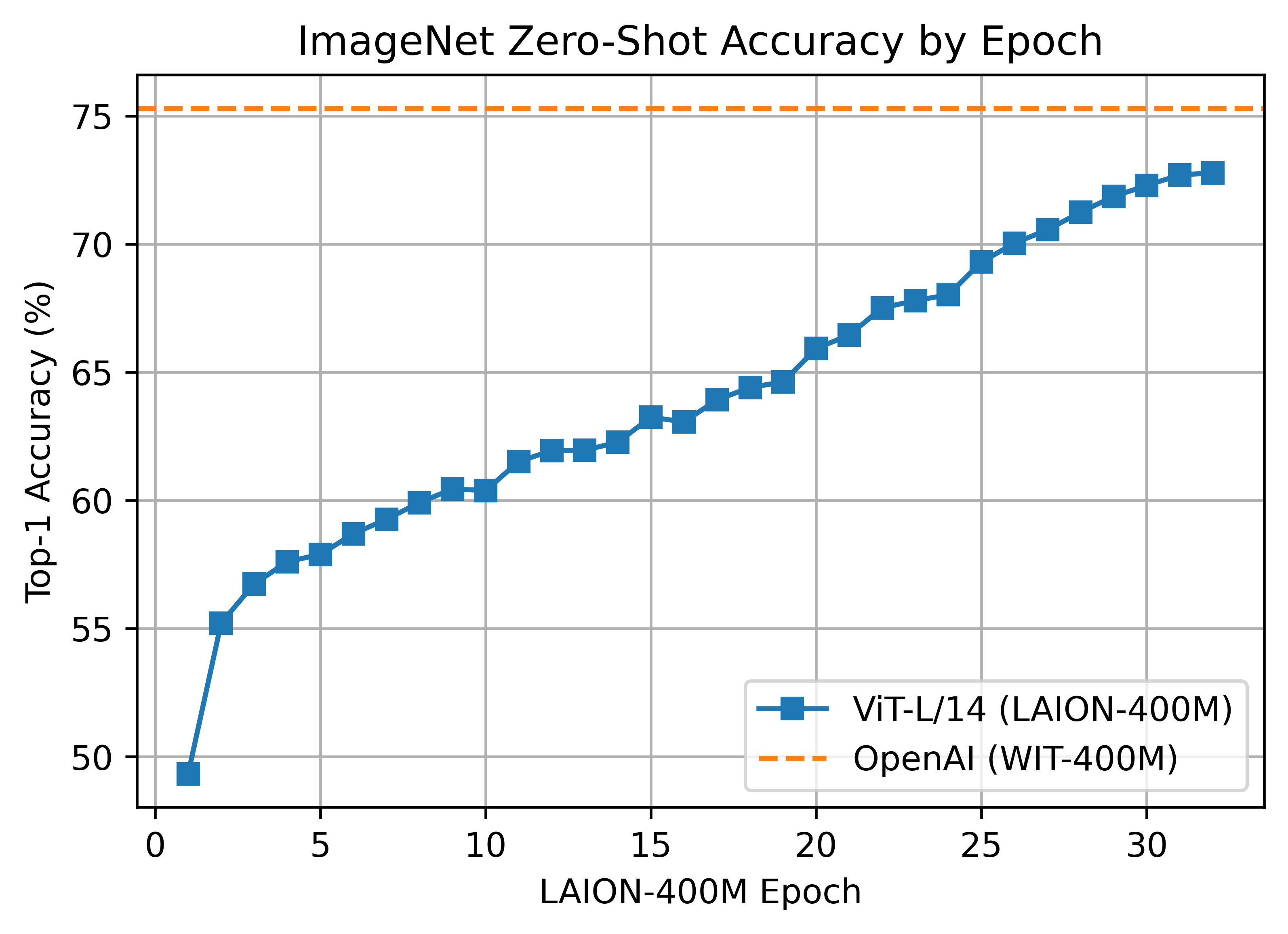

Romain Beaumont on Twitter: "It makes it possible to do multilingual text-to-image retrieval using an existing kNN image index that was built using CLIP ViT-L/14 embeddings. Test it yourself

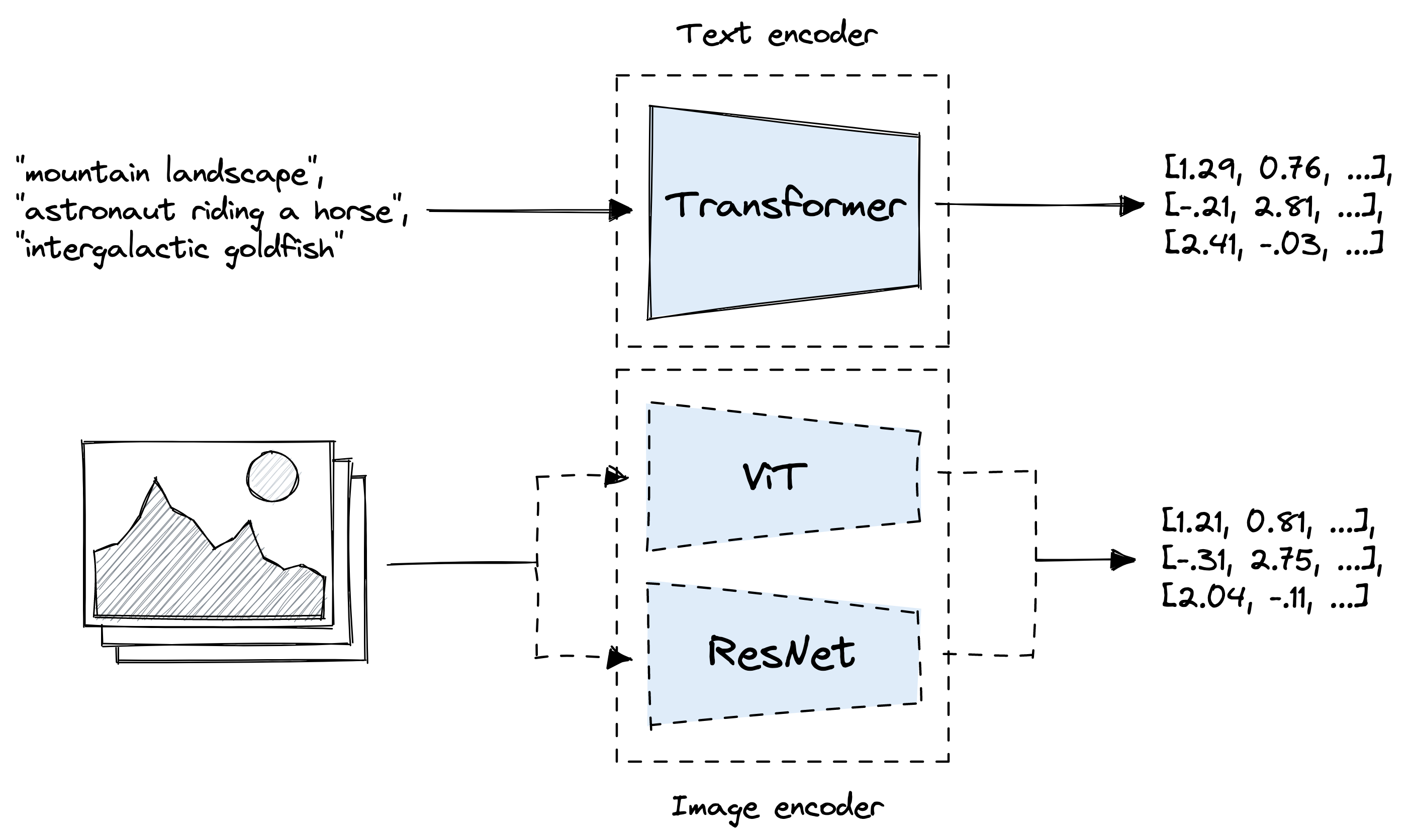

Computer vision transformer models (CLIP, ViT, DeiT) released by Hugging Face - AI News Clips by Morris Lee: News to help your R&D - Medium

multimodal ai art (@multimodalart): "Breaking news: OpenAI open sourced their CLIP ViT-L/14@336px! https://github.com/openai/CLIP/commit/b4ae44927b78d0093b556e3ce43cbdcff422017a I'll hook it soon to many generation systems, stay tuned!" | nitter

Niels Rogge on Twitter: "OWL-ViT by @GoogleAI is now available @huggingface Transformers. The model is a minimal extension of CLIP for zero-shot object detection given text queries. 🤯 🥳 It has impressive